Updated: Comment #169

This is being split into separate issues to make changes easier to review.

- This is being coded in the D8MI - Entity Translation sandbox.

- Test issue: #2298525: Test issue for Make ContentEntityDatabaseStorage handle changes in the entity schema definition.

Problem/Motivation

In #2183231: Make ContentEntityDatabaseStorage generate static database schemas for content entities we introduced the capability for the entity storage to automatically generate the SQL schema for any entity type. This is currently limited to static generation at install time and concerns only the tables described in the official entity storage SQL table layout standard. That is the ones storing values for base fields. At the moment dynamically handling changes in the entity type and field definitions, and reflecting them in the SQL table layout, is not supported.

This is a critical missing piece towards achieving a whole set of improvements:

- Modules are no longer required to inject random data into the

$entityobject during the load phase, since all the data retrieval is handled by the storage itself. This will motivate module authors to define their data through field definitions, instead of using random properties, with all the related benefits, such as automatic Rules integration, typed-data validation and so on. - Any entity type leveraging the core entity storage API, natively supports revision and translation without requiring module authors any additional effort.

- Translation and revision support can be independently enabled/disabled by just altering the entity type definition. This makes it easy for site developers to adopt the table layout that is the most performant with respect to their business requirements.

- Additional base fields can easily be added to the native entity table layout, making it straightforward to implement highly-efficient denormalization strategies.

- The API is designed without having a particular storage backend in mind. This means module authors can leverage a storage-agnostic API without any additional effort required.

Proposed resolution

Part 1: unified API

- Extend the content entity schema handler implemented in #2183231: Make ContentEntityDatabaseStorage generate static database schemas for content entities to be able to handle the addition/removal/update of field storage definitions, transparently handling differences between single-valued base fields, which are stored in the entity tables, and bundle fields or multiple-value base fields, which instead are stored in dedicated tables.

- Make the content entity schema handler able to handle changes in the entity type definition for the revisionable and translatable properties.

- Move the Field SQL Storage (private) API to the (default) table mapping implementation, which is the SQL specific part of the core entity storage API, responsible for representing how fields are mapped to tables.

Part 2: UI and data handling

- Implement a new

EntitySchemaManagerservice responsible for spotting differences between the installed schema and the one generated from the current versions of the entity type and field storage definitions. Probably leveraging state to track changes. - Based on the current Field Storage API limitations, implement a set of validation rules so that only changes that do not imply a data migration are applied to the schema when data exists, that is:

- adding a field table

- adding a custom field column to a base/data/revision table

switching from revisionable to non-revisionableswitching from translatable to non-translatable

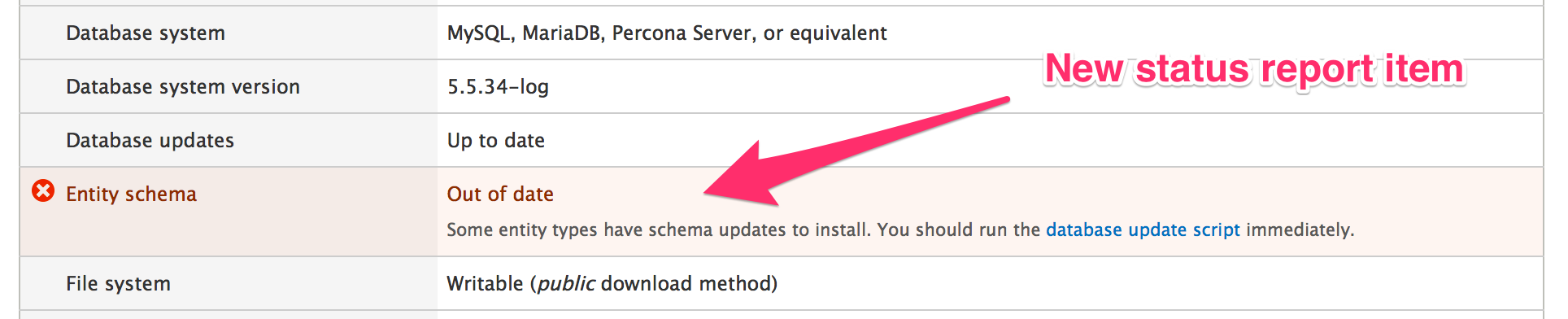

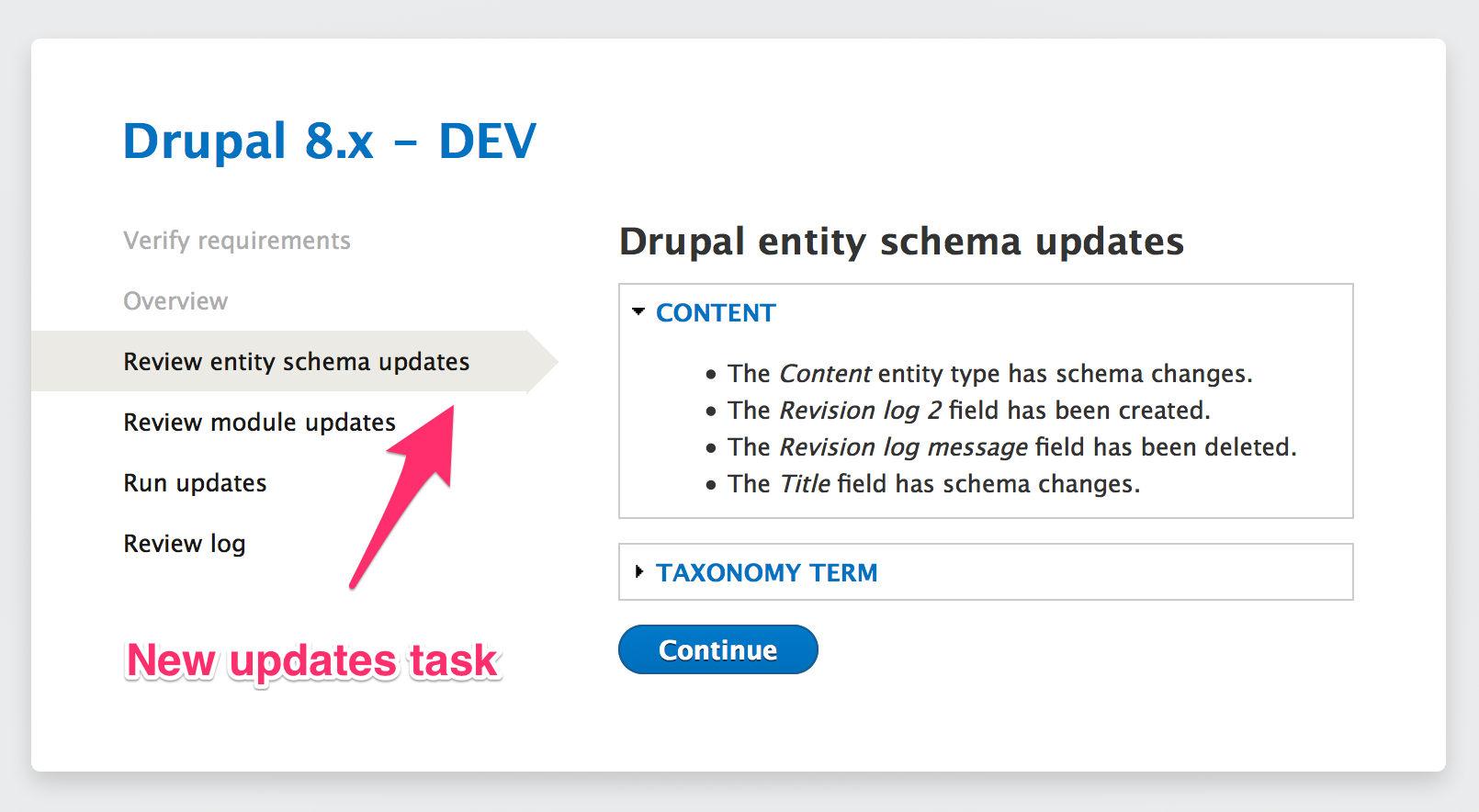

- Any change not (yet) reflected in the installed schema is reported as an error in the status report, which offers a link to the

update.phpscript to fix the database schema. Theupdate.phpscript calls the entity schema manager to trigger any allowed schema change.

See #48 for a detailed description of the implementation plan: it's a bit outdated but many details are still valid.

Remaining tasks

- #2326719: Move pseudo-private table mapping functions from ContentEntityDatabaseStorage to public API of DefaultTableMapping

- #2326981: Move \Drupal\field\FieldException to \Drupal\Core\Field\FieldException

- #2326949: Move entity-type-specific schema information from the storage class to a schema handler

- #2330121: Replace ContentEntityDatabaseStorage::getSchema() with a new EntityTypeListenerInterface implemented by SqlContentEntityStorageSchema

- #2333113: Add an EntityDefinitionUpdateManager so that entity handlers can respond (e.g., by updating db schema) to code updates in a controlled way (e.g., from update.php)

- #2337927: SqlContentEntityStorage::onFieldStorageDefinition(Create|Update|Delete)() is broken for base fields

Follow ups

- #2282119: Make the Entity Field API handle field purging

- #2248977: Complete support for multi-value base fields in ContentEntitySchemaHandler and use it for the user.roles field

- #1740492: Implement a default entity views data handler

- #2079019: Make Views use SqlEntityStorageInterface

User interface changes

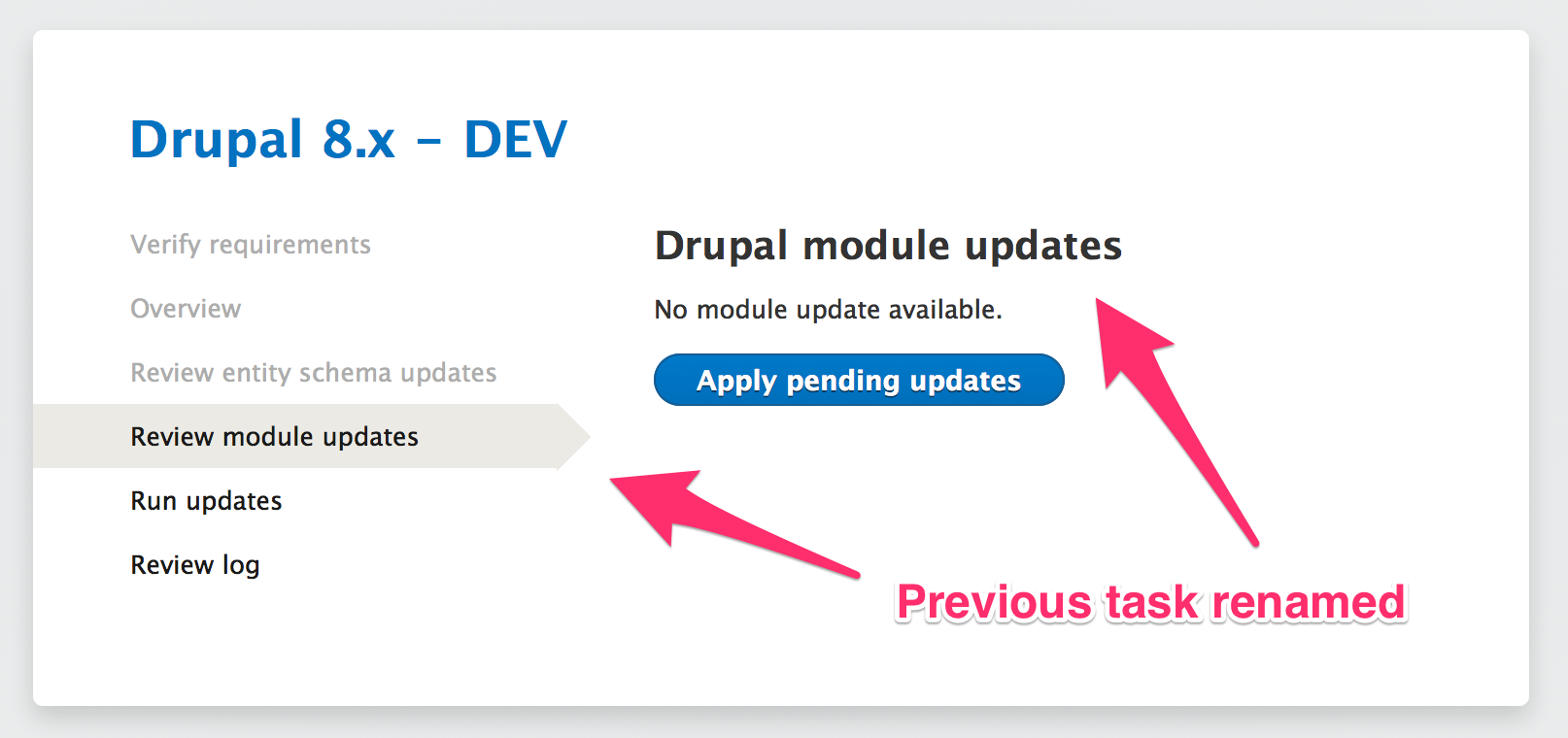

- Entries added to the status report page.

- Minor tweaks to the

update.phpUI.

API changes

- All the Entity Field CRUD API has moved to the entity schema handler.

- All the Field SQL storage (private) API has been moved to the default table mapping.

| Comment | File | Size | Author |

|---|---|---|---|

| #169 | et-entity_schema_handling-1498720-169-review-do-not-test.patch | 232.57 KB | effulgentsia |

| #164 | et-entity_schema_handling-1498720-164.patch | 319.9 KB | plach |

| #164 | et-entity_schema_handling-1498720-164.interdiff.txt | 9.76 KB | plach |

| #151 | et-entity_schema_handling-1498720-151.patch | 299.37 KB | plach |

Comments

Comment #1

plachWe need at least one translation schema created to go on with this.

Comment #2

plachComment #3

catchUn-postponing.

Comment #3.0

plachUpdated issue summary.

Comment #3.1

plachUpdated issue summary.

Comment #3.2

plachUpdated issue summary.

Comment #4

das-peter commentedHere's a first POC of how I could imagine this could be done.

Comment #5

das-peter commentedSummary of discussion in IRC with fago:

hook_schema().schema_settingsby information from constrains and new data types where necessary.Comment #6

das-peter commentedHere's a next attempt.

This is quite hard because at the point when

hook_schema()is invoked the entity information aren't yet updated. Thus we can't access the storage handler.I don't know if we can or should change the installation order.

Looks doable, however there are some pain-points:

schema_settings. Downside is that this has no effect on the validation stuff.Comment #7

damien tournoud commentedIndexes are going to be a whole world of pain.

Isn't this basically just #1416558: hook_entity_info(), hook_schema(), and the field system are strongly bound to each other? One way of solving this as part of this patch is to remove the whole

'schema_fields_sql'thingie. We don't need that anymore if we enforce that the schema is generated directly from the field definitions.Comment #8

yched commentedJust FYI :

Yeah, exposing dynamic schemas for field_sql_storage tables in it's hook_schema(), based on field definitions stored in ConfigEntities, has been a absolute can of worms in #1735118: Convert Field API to CMI.

Current patch does it by reading directly from the underyling config() files, skipping entity_load() - entity_load() within hook_schema() is a road to hell currently.

But : there has been discussions as to whether field_sql_storage really needs to expose its field data tables to hook_schema() to begin with. That's a *lot* of information, that isn't used anywhere in practice.

The people I asked so far (@chx, @fago, @effulgentsia) were all of the opinion that we probably shouldn't bother.

The pros & cons might be different when it comes to entity base tables, dunno for sure, but the two (base table, field data tables) should probably be treated the same ?

Comment #9

damien tournoud commentedThis information has actually been very important in the past (in Drupal 7, for example, ctools is able to create relationship context automatically from the foreign keys). If tomorrow the SQL schema doesn't bring more information then the TypedData schema, of course we don't necessarily need to bother.

Comment #10

berdirIn regards to default values, see #1777956: Provide a way to define default values for entity fields, but I'm not sure if we actually want to define that on the schema because the defaults could be dynamic I guess and you're not supposed to create database records yourself anyway.

Comment #11

damien tournoud commentedYes, I think it's fine not to define defaults on the SQL layer.

Comment #12

yched commentedRight - though double checking, ctools does use drupal_schema() to get the foreign keys on entity types base tables, but reads foreign keys on field data tables through hook_field_schema(), not drupal_schema() / hook_schema().

Asked for feedback on "do we want to expose schemas of dynamic field data tables in hook_schema() ?" in #1735118-181: Convert Field API to CMI.

Comment #12.0

yched commentedUpdated issue summary.

Comment #13

plachComment #13.0

plachUpdate issue summary

Comment #14

yesct commentedwhile discussing #2057401: Make the node entity database schema sensible in irc, was pointed here while I was figuring out how the baseFieldDefinitions() in Node related to node_schema in node.install and the DatabaseStorageController and the EntityManager. (tagging d8dx since this might help with that confusion, maybe another tag would be better)

updated the issue summary.

Comment #14.0

yesct commentedsummarized, and used template.

Comment #14.1

yesct commentedoops. space.

Comment #15

amateescu commented#2144327: Make all field types provide a schema() is a prerequisite for this.

Comment #16

effulgentsia commentedWhat I like about this issue is that if you, say, swap in a MongoDb controller, then you don't need pointless tables in the MySQL db. However:

If I'm reading that right, then that means a different MySQL controller (say, one that wants to make menu link titles multilingual) would generate a different table. So, then, what happens when a table is made with one controller, and then a different controller is swapped in? Is the new controller responsible for altering the tables from any arbitrary schema into the one that it needs?

Comment #17

amateescu commentedMy initial reaction would be to say that we can't (or shouldn't?) support swapping controllers if the current tables are not empty..

Comment #18

plachYep, a contrib module switching-in a different storage controller might try to write a migration to move data around, but I think that in the core context we should assume an empty storage. I was planning to write all the code here in the assumption there is no entity data yet. We can try to write a migration to switch between the table layouts supported by core but that would totally be a follow-up issue (and might also be contrib material).

Comment #19

plachThis is at least major as (together with #2144263: Decouple entity field storage from configurable fields) it will allow us to implement the unified storage discussed in Prague.

Comment #20

gábor hojtsyCritical as per #2047633-116: Move definition of menu links to hook_menu_link_defaults(), decouple key name from path, and make 'parent' explicit and following comments.

Comment #21

effulgentsia commentedGiven that catch approved this issue being critical in the issue linked in #20, and that this is clearly data model/schema related, I'm tagging it "beta blocker" despite not having explicit approval to do so. Someone can correct me if they disagree.

Comment #22

plachComment #23

chx commented> since all the data retrieval is handled by the controller itself. This may allow us to kill hook_entity_load() and hook_ENTITY_TYPE_load() altogether.

Erm. Nope? What if two modules want to add data? Both can't replace the controller.

Comment #24

plachWell, the original idea was that everything would be a field, so any addition would be loaded by the storage controller, which would allow for fields to specify a custom storage engine if needed (see the "Unify Field Storage" section of the related Prague Notes). Computed data would just be computed fields. That said, I am no longer sure this would extinguish the need for

hook_entity_load()(or an equivalent event) so removing it in this stage of D8 development would probably be a bad idea.Comment #25

plachTo clarify: we were not proposing to support per-field storage again, just the ability to specify a different table layout than the default one.

Comment #26

Sylvain Lecoy commentedDoctrine #1817778: Consider using Doctrine ORM for Entities has this feature already, plus it supports schema version (e.g. adding or removing fields, changing the nature of the relationship, etc.).

Comment #27

plachThat's a D9 issue, is it?

Comment #28

Sylvain Lecoy commentedYes it is, for the record it was originally against drupal8, then postponed.

As I see this issue postponed too now, it might be interesting to no re-invent the wheel if Doctrine (like Symfony2 folks use) is chosen.

I am pretty sure drupal will use an ORM in the end and provide tools to integrates these ORM just like it has become the Java standard with Spring/Hibernate.

Just look at how symfony guys did, it will become the common standard in php in a few years.

Comment #29

Sylvain Lecoy commentedThen every body will be amazed by ORM and DataModel visualisation and modeling through graphical IDE and @nnotations but this is a feature we had since 10 years in Java.

We'll also be able to remove the need for 'EntityInterface' and every developers will thank us for this, ORM taking the responsibility of creating tables, but also maintaining them, by deleting, updating and creating relationships (through foreign keys or not) as the conceptual data model evolves in the code by annotations.

We are not ready for this when I pledged in the issue this will be our future, but one day we'll have no choice. We don't want to rewrite code that has been tested for years, which is performant, and widely accepted as common pattern in other technologies such as persistent web servers like J2EE.

Comment #30

plachI agree this option should be seriously took into consideration in the future, but I fail to see how we could do such a big change in this phase of D8 life-cycle, honestly.

Comment #31

Sylvain Lecoy commentedSorry for my intrusion in the conversation, just wanted to tell you I am really excited about this field, and wanted to point you to some work in progress for drupal 9. I have also a working copy (generates entity on drupal 7 from Schema API, not yet Field API) here: https://drupal.org/project/doctrine.

Please continue to your original idea, for now its almost impossible to introduce such a big change, that's what I've been told by people in 2012 for D8 so i'm not surprised to hear the same thing now in 2014 :D You got a point.

Comment #32

plachWell, it's great that you are already exploring this idea: by the time it can be seriously considered for core inclusion, we will have some real-life data to evaluate :)

Comment #33

andypostThere's no issues blocking this

Comment #34

plachActually #2068325: [META] Convert entity SQL queries to the Entity Query API is more or less a prerequisite. However I am planning to start working on this in a few days.

Comment #35

plachComment #36

plachComment #37

plachComment #38

tstoecklerRe #34: Hey there, I recently started some initial work which I just posted in #2183231: Make ContentEntityDatabaseStorage generate static database schemas for content entities. Maybe we can coordinate efforts.

Comment #39

plachIt looks like a duplicate to me :(

Comment #40

tstoecklerWe can certainly continue here but the patch over there is not really an a reviewable state. I posted in only because I wanted to *avoid* any duplication if you wanted to get started on this soon per #34. The only patch in this issue was very much outdated so I don't think I duplicated much work?!

Comment #41

plachNo problem for the patch, but I think keeping these two issues open would be misleading. Also, it would be good to discuss the approach before diving into coding: there are some non-trivial aspects to figure out.

Btw, did you have a look to the Prague notes ("Unify Field storage" section)?

Comment #42

andypostThis needs to unify field and data type plugins in #2150511: [meta] Deduplicate the set of available field types

For example

node.titledefines itself as 'text' but have no 'format' associated.Comment #43

sunComment #44

tstoecklerPosted a new, now working patch in #2183231: Make ContentEntityDatabaseStorage generate static database schemas for content entities. I kept this as a separate issue for now, as in theory we could handle the actual schema generation there, but still install the schema manually. This has turned out to be a pretty massive issue on its own. Then once that is in place, at least partly, we can figure out the dynamic creation/deletion here. Do you think that makes sense @plach?

Comment #45

tstoeckleralso, it's now called FieldableDatabaseStorageController

DatabaseStorageController is the legacy one that's onely being used by menu link now.

Comment #46

andypostComment #47

plachI've been sketching an implementation plan in the last couple of days. I should be able to post it soon for review...

Comment #48

plachAside from the aspects already laid out in Prague , below there is a description of how I'd implement this.

Goals

Implementation overview

In the current proposal there is a new entity controller/handler (an instance of

EntitySchemaHandlerInterface, actual names TBD), that is responsible for performing all the schema-related operations. The schema handler:Additionally a service (implementing

EntitySchemaBuilderInterface) is used to trigger the actual actions performed by the schema handler. The core implementation is owned by the Entity module, which implementshook_rebuild()to react the proper way when entity and field info are regenerated. This is the main (only?) core way to rebuild/reinstall the entity schema definition. Contrib modules could swap in a more advanced schema builder, that could provide an actual UI to trigger schema changes, show schema version diffs and start data migrations. The core schema builder refuses to install a new schema version if data is available and the required action cannot be performed. Instead it reports the inconsistency in Drupal's status report as an error.Entity schema is initially generated and installed during the Drupal installation process. We need to check whether switching storage controller is possible during installation, so we do not need to always install the default SQL schema before swapping it out. This is definitely something we should try to avoid, as users are created during the installation process, so any alternative storage controller would need to provide a migration and do some magic to move users off their SQL table.

The entity schema definition is stored through the State service. The stored data includes:

Default SQL implementation details

The core

SqlSchemaHandler(as well as the related storage controller) supports 4 table layouts, depending on whether the entity type supports revisions, translations, none or both. When building the schema definition it loops through the field definitions and assigns each field to a "base" table ({entity},{entity_revision},{entity_field_data},{entity_field_revision}) or a field table. The actual column schema definitions are picked from the field schema. By default indexes are generated based on entity keys and field schema definitions, but every entity type can provide its own specialized version of the schema handler to optimize index definitions (or any other part of it). I think we should add a newrevisionableproperty on field definitions: this would allow the schema handler to avoid storing unnecessary/unwanted data in the revision tables.The storage controller applies the same logic to tell in which table to store each field, so it does not need to rely on the fully generated schema. This is not necessarily a big gain as field schema definitions might be needed to perform the actual queries. However one of the goals is trying to get rid of this requirement.

Entity data handling

As I said above, the core schema handler supports performing only "data-safe" changes. This means that when data is available only the following schema changes are allowed:

We probably need some kind of confirmation step before performing an operation that would imply data loss (dropping columns or tables). For instance this status might trigger a warning in the status report with a link to a confirmation form.

Any other change, mainly switching from a simpler table layout to a more complex one (e.g. from non-revisionable to revisionable) requires a data migration and as such is not supported. Any attempt to trigger such an action causes an exception to be thrown.

Views support and (legal) raw SQL access

Views is the most obvious example of a module legally needing to perform SQL queries directly. In D8 this would be discouraged but Views offers a vast set of SQL-specific features that would be impossible to implement otherwise. The point is that Views' flexible architecture allows for pluggable query engines: there is nothing wrong in having a SQL-specific engine, as long as it is possible to provide other ones for alternative storages. However the fact that we support swappable entity storages implies that Views cannot rely on a fixed table layout (not even assuming the core entity storage), hence we need a way for it to generate entity (views) data automatically based on entity/field definitions (see #1740492: Implement a default entity views data handler). The ways this issue could help with that are:

SqlStorageInterface::getTableMapping($field_name)(see also #2079019: Make Views use SqlEntityStorageInterface):The data above + the entity field definitions should allow to implement a base entity views data controller.

Comments welcome :)

Comment #49

andypostAlso this code could be re-used to generate "table-per-entity type" for comment, comment statistics and history tables that all have entity_id and int but fieldable entities could have ID as string, the approach suggested by @catch in #2081585-79: [META] Modernize the History module

Comment #50

plachIf I understand correctly, those could be defined as custom fields. In that case, yes, they would get dedicated per-entity-type tables.

Comment #51

berdirThe problem with that is also what @catch commented in #2205215: {comment} and {comment_entity_statistics} only support integer entity ids. We have no API to interact with single fields, only entities. So making them field storage, all changes would need to save the whole entity.

The only thing we could do is to optimize internally that to only update field tables that have changed, but that's it.

Comment #52

fagoI'm not sure it makes sense to have a separate controller/handler for taking care of schema changes. How or whether you have to deal with schema changes is highly storage dependent, so I'd see this as the job of the entity storage. If it's about pluggability, I'd agree that it makes sense to separate that out - either in a service injected into the storage controller, or similarly using a composite pattern as I've already suggested while moving field storage to entity storage.

That said, shouldn't be

FieldableEntityStorageControllerInterfaceenough of an interface for handling schema changes? It needs to be generalized to work with entity field interfaces (for which we need to create aFieldStorageInterface, as part of #2116363: Unified repository of field definitions (cache + API) or as separate issue) - see #2144263: Decouple entity field storage from configurable fields. As we can generate our schema based on the field definitions now, having the changed field definitions should be enough to allow any storage to perform any necessary change actions.That said, imo the API should not be about schema changes, but about field definition changes.

For that, I think a good first step would be to get going with adding necessary information to our metadata, i.e. #2143069: Add getProvider() and hasCustomStorage to FieldDefinitionInterface and the revisionable flag, this should be the easy/quick wins. Then make sure we've

FieldStorageInterfaceand generalizeFieldableEntityStorageControllerInterface. Then, make sure stuff depending on the tables has a way to lookup tablenames (= add an extended interface for SQL storage engines: #2079019: Make Views use SqlEntityStorageInterface). Then finally we need to test and verify that stuff does not break.One issue we need to solve is how we'd generate field definition changes for module provided fields. Either we store previous definition versions and do it automatically, or we'd have to require developers to write suiting update functions (should be ok I think and is less auto-magic).

Finally, a one of the bigger tasks would be to make the schema generation for base tables the job of the storage controllers. A quick first step could be moving code and responsibility, while basing the base table schema on the field schema is probably more tricky - issue: #2183231: Make ContentEntityDatabaseStorage generate static database schemas for content entities.

That's how I'd see, but let's discuss asap, nail down steps and get the ball rolling. Maybe let's discuss asap in szeged.

Comment #53

fagoComment #54

plachGiven #52 I guess it's probably better to discuss this once again in Szeged before starting the actual work.

Just a quick answer: the main reason why I'd go for a separate class to handle entity schema is that it may involve quite an amount of code that we don't need to load on most requests. Obviously it would make little sense to swap the storage controller without swapping the schema handler too, so yes I see them strictly coupled in terms of actual usefulness. They would have two quite distinct responsibilities though, so having two classes would be correct IMHO.

Comment #55

Crell commentedJust because schema changes live in a separate object doesn't mean they have to be part of a global subsystem that all storage drivers have to implement. This is another reason all of these objects should just be in the container, not coupled together via the annotation by class name.

It's completely legit for the entity storage handler for SQL to depend on 4 other objects, including the database service, in order to do what it needs to do. Meanwhile, the MongoDB handler can only depend on 2, neither of which map conceptually to any of the 4 sub-objects that the SQL handler uses. That's not only OK, that's a good architecture if that detail is hidden behind the storage handler's interface in ways that the calling code doesn't give a damn about.

Architect globally, implement locally. :-)

Comment #56

tstoecklerI agree with everything that's been said so far. I also agree it makes sense to put this logic into a separate class and inject that as a service, I will refactor #2183231: Make ContentEntityDatabaseStorage generate static database schemas for content entities accordingly.

For now I will keep that issue in its limited scope, i.e. I will not account for any schema changes. I will also not create the schema only on demand but will hardcode the schema creation into ModuleHandlerInterface::install(). We will need to work out how we store the information which tables have been created with which state in a separate issue. I think that is a non-trivial problem on its own.

Something like a field-definition-update API that #52 talks about sounds pretty sweet. I was not aware of #2116363: Unified repository of field definitions (cache + API), I will read up on that.

Comment #57

plachDiscussed this again with @fago, @tstockler and @berdir. We decided to split this in smaller tasks. We are currently working on:

#2183231: Make ContentEntityDatabaseStorage generate static database schemas for content entities

#2143069: Add getProvider() and hasCustomStorage to FieldDefinitionInterface

@Crell:

We agreed to introduce the schema builder as a service: my only concern was the inability to have a different class per entity-type, if needed. @fago and @berdir pointed out that we can simply inject a different service, so that works for me :)

Comment #58

plachComment #59

fagoAnother dependency: #2144631: Add a revisionable key to field definitions

Comment #60

Crell commentedMake it a service, or make it a required service that every entity type or storage handler must define?

The former is what I was saying we should do. :-) The latter I'm saying is unnecessary, or if it's necessary then it's a sign of a deeper design flaw.

Comment #61

plachI'd say the former: every storage controller can define its own list of dependencies, and I don't think we are hardcoding the need for the schema builder anywhere in the public API...

Comment #62

dave reid/me has no idea how this would affect or work for File Entity in contrib which "enhances" the file entity type with bundle information, which core doesn't even have the schema to hold.

Comment #63

plach@Dave Reid:

I think this should let you obtain the same result by just defining a new bundle field, which would be then automatically added to the schema. It may also let you add the other stuff that is currently defined in

file_entity_schema(), if you define them as additional custom fields. Not sure whether that would make sense actually, I didn't study code closely.Comment #64

jessebeach commentedHas this issue become a META for the following or is it really postponed on them?

#2183231: Make ContentEntityDatabaseStorage generate static database schemas for content entities

#2143069: Add getProvider() and hasCustomStorage to FieldDefinitionInterface

#2144631: Add a revisionable key to field definitions

Comment #65

plachFor now we are planning to do some of the work described in #48 directly here.

Comment #66

jessebeach commentedComment #67

fagoOpened an issue for the field storage interface dependency: #2226197: Introduce FieldStorageDefinitionInterface in the Entity Field API.

Comment #68

plachComment #69

plachComment #70

plachComment #71

plachComment #72

jessebeach commentedComment #73

Anonymous (not verified) commentedI just found this issue. OMG I will be so happy when this will be in core!!!!

Comment #74

plachThe actual schema generation is addressed in #2183231: Make ContentEntityDatabaseStorage generate static database schemas for content entities. We will provide a unified API to track schema changes in #2144263: Decouple entity field storage from configurable fields. Here we will have to react to any change in the entity schema definition and handle it properly.

Comment #75

Sylvain Lecoy commentedYou might be interested in looking at the reverse procedure: e.g. building an entity object from Schema API: http://drupalcode.org/project/doctrine.git/blob/48b52566638b3e31e3e0ebff...

If you have any questions i'll be glad to help :)

Comment #76

dave reidNow that #2183231: Make ContentEntityDatabaseStorage generate static database schemas for content entities landed, I think this is now a major blocker for porting File entity to D8.

Comment #77

tstoecklerComment #78

xjmThanks @davereid; that's important to know.

@tstoeckler: I think this issue was also still postponed on #2144263: Decouple entity field storage from configurable fields? Or is it possible to work on them at the same time?

Comment #79

tstoecklerHmm... I would have thought "Yes", but let's let @plach decide, as he has concrete plans for this issue, I think. Marking back to postponed for now.

Comment #80

effulgentsia commentedJust chatted with @fago and @plach, and they say that while this issue won't be finishable/committable until after #2144263: Decouple entity field storage from configurable fields is done, it can still be started in parallel. Leaving postponed for now, until someone begins that work.

Comment #81

plachI will do tomorrow

Comment #82

fagoComment #83

fagoupdated the issue summary based on a call with plach and timplunkett

Comment #84

plachComment #85

plachComment #86

fagoAs discussed with yched, alexpott, mtift, plach and xjm (notes here) we'll have to move the field purging mechanism to the entity field API and keep deleted field definitions in state for being able to properly solve this. However, we can postpone that to a follow-up and just forbid uninstalling modules providing fields as long as the fields have data for now.

-> Thus, we'll have to forbid uninstalling modules providing fields as long as the fields have data as part of this issue.

Comment #87

fagoopened #2282119: Make the Entity Field API handle field purging

Comment #88

plachA first patch, not really ready for review yet, to see how many failures we have. This includes #2144263: Decouple entity field storage from configurable fields as it depends on it.

Comment #90

plachSome fixes

Comment #92

plachThis should fix quite a few test failures.

Comment #94

plachMore fixes

Comment #95

plachGreen, cool.

As I was saying this is not ready for review yet, unless you just want to have a look to how the new API to handle field schema changes looks like. Still lot to do, but we have a good foundation now.

Comment #96

plachOk, this should be more serious: it implements schema handling for shared table fields.

There is still room for a lot of clean-up and field data purging is not handled (it will in #2282119: Make the Entity Field API handle field purging), but aside from additional test coverage this first step should not be too far from completion.

Next step is automatically detecting and applying changes. I will start working in a separate branch based on the current one, so we can decide whether split that part off in a separate non beta-blocking issue or whether it makes more sense to have them together.

The full patch is for the bot and includes #2144263: Decouple entity field storage from configurable fields, the one to review is, well, the

.reviewone :)Comment #98

plachOops

Comment #100

plachThe last changes exposed this nice bug

Comment #101

plachRerolled after #2144263: Decouple entity field storage from configurable fields (yay!)

Comment #102

plachAnd no longer postponed!

Comment #103

alexpottRead through the patch - just get on terms with what is going - the patch attached has some minor clean up and shows that we have a lack of test coverage around ContentEntityDatabaseStorage::doDeleteFieldItems(), ContentEntitySchemaHandler::getSharedTableFieldSchema() and ContentEntitySchemaHandler::getDedicatedTableSchema(). The last two are using FieldException - the patch in 101 didn't include a use statement for this class. And ContentEntityDatabaseStorage::doDeleteFieldItems() was not touching the revision table which looks necessary.

Comment #105

Anonymous (not verified) commentedNope nope nope nope nope nope nope . Why would you do that? That has nothing to do with schema. It's an event trigger and what modules do with it is not Drupal's concern.

Comment #106

plach@alexpott:

Sorry, I was going on with my work in the sandbox (see also #2298525: Test issue for Make ContentEntityDatabaseStorage handle changes in the entity schema definition), I hope I will be able to include your interdiff without merging issues :)

@ivanjaros:

That's no longer on the table (see #24), I will update the issue summary when I have a more stable patch.

Comment #107

alexpottFix tests. I got getEntityTypeId and getTargetEntityTypeId mixed up!

Comment #108

dawehnerSomeone will have to update the issue summary, it is kinda outdated.

One general question: People did not wanted to expose the table name / column name as API, but I guess it is unavoidable here.

Comment #109

plachI will soon, I'm almost done with the first part (at least we should be close to a reviewable patch), see #2298525: Test issue for Make ContentEntityDatabaseStorage handle changes in the entity schema definition.

Yep, I am afraid it is. The route I took here was "hiding" it in the table mapping, so it's available only if you have a storage class that implements

SqlEntityStorageInterface.Comment #110

fagoI shortly reviewed the patch, without going into all the details for now. Overall I like how code has been refactored and moved around!

Here some remarks:

This naming and description confused me a bit - what does it mean to mark a schema as deleted? Schema does not support something like that ;)

Maybe, it should be just prepareFieldSchemaDeletion()?

Why does the one allow it and the other one require it. Cannot it just be "hasXTableStorage()" or usesXTableStorage()?

Also, the logic seems a bit duplicated, maybe one could check the other, i.e. !customSTorage + !shared table -> dedicated table?

Can it rely on having SQL storage here? Also on various other places - it seems to be a pre-existing assumption though. I guess we should ensure it does at least not FATAL on non-sql storages, besides providing a neat way to provide views integration still. Stuff for another issue.

Comment #111

plachThanks @fago, but that patch is very outdated, I am working in #2298525: Test issue for Make ContentEntityDatabaseStorage handle changes in the entity schema definition. I will see if I can incorporate your feedback there.

Comment #112

yesct commentedadding the more widely used tag.

Comment #113

plachThis implements the first part of the work, see the (upcoming) updated issue summary. I am now going to start phase 2 ;)

Comment #114

plachComment #115

plachComment #116

plach@fago:

The latest patch addresses most of your review. Some replies:

Comment #117

plachBetter title

Comment #118

plachEven better

Comment #119

plachComment #120

sunUgh. I wasn't aware that the result of the Entity Schema effort was going to retry a reinvention of Data API ad-hoc and 5 minutes to midnight, even though there's plenty of past evidence to prove that the approach simply doesn't work out for more complex data types in SQL storages.

Likewise, for NoSQL storages, we're talking about either (1) excessive mass-updates of all stored items, or (2) application code that has backwards-compatibility layers baked in, so as to be able to understand data/properties/values in different formats.

What exactly did we sign up for by committing the entity/field schema abstraction?

Comment #121

xjmFYI, "Five minutes to midnight" is an unreasonable characterization as these issues have been beta-blocking since December (and #2183231: Make ContentEntityDatabaseStorage generate static database schemas for content entities was committed on June 1).

Comment #122

berdirThanks @xjm :)

I experimented a bit with this with file_entity and managed to use it to add the type/bundle field: https://github.com/md-systems/file_entity/commit/8bd8e27f2cd5e488edba4bf...

I had one problem and that is that the bundle field is NOT NULL and I need an 'initial' value. I experimented with supporting that automatically, it's very simple but a bit random, see 8.x-et-support-initial-1498720-berdir. Not saying you should include it, just putting it out as an idea. Would certainly need tests and documentation. As an alternative, I could override the schema handler through a custom storage class and add the initial there.

Comment #123

chx commentedI thought massive changes are going to be handled by the migrate system ; just add D8 source classes to taste?

Comment #124

plach@sun:

TBH I am a bit surprised by your comment: as usual, I am completely open to discuss technical stuff, but your comment seems to imply I am trying to sneak in this huge change last-minute without prior discussions. Actually it's the exact opposite:

To sum up, I think I did everything I could to advertise my goals in general and this issue's goals in particular. If that was not enough, I am sorry, but it wasn't certainly due to bad will. The only reason I started working on this so late is that there was an almost infinite list of prerequisite issues to address before it.

That said, thursday 6pm CEST we are going to have the usual #drupal-entity meeting with most key people in this area: you'd be welcome to join us so we can discuss your concerns.

@Berdir:

Your code looks certainly promising, if there are no major objections I'd see it as a valid solution.

@chx:

Not sure whether you are referring to @Berdir's comment or this issue in general, I will assume the latter: the plan for core is limiting schema changes to those not-implying data migrations, e.g. the usual field table addition. The service responsible for managing changes could be swapped in contrib with an implementation relying on the Migrate API to deal also with changes that involve data migrations.

Comment #125

plachRestoring new version

Comment #126

yched commentedThe case of "initial" is a bit tricky.

- it only applies to the case where you're adding a new field stored in the base table, where there is already one row per existing entity. When used on a field stored in a dedicated table, supporting an initial value would mean inserting potentially 1000s of new rows.

- the "initial value" is not inherent to the field type, but very much case-by-case (it's the code / person that adds the new field that knows the correct initial value for that new field specifically).

To me, the above says "not part of the field type schema, not handled by the field storage layer, belongs to migrations".

Comment #127

fagoI'm not into bikesheeding, but I'd like to understand why one allows and the other requires the storage "approach". Is requiresDedicatedStorage() stronger? (Its docs seem to be wrong as they are from shared storage.).

Looks like this has been addressed, thanks :)

Comment #128

xjmWe discussed this issue in the IRC meeting yesterday (including @fago, @berdir, @effulgentsia, @plach, @yched, @swentel, and @alexpott). Conclusions:

Comment #129

joachim commentedIt's a long time since I've worked on Data module...

What I do remember is that has a hideous circularity problem that I never properly managed to fix. I don't recall any of the details, but the comments I put in the code at the time can do that for me ;)

So it looks like the big problem was that Data table definitions are exportable with CTools. Also, Data needs to use hook_schema_*alter*() because it's drawing from data that's in a table. With the D8 config system that won't be a problem at least.

Comment #130

berdirentity tables aren't exposed to drupal_get_schema() at all (nor are field tables), so anything related to that can't be an issue :)

Comment #131

chx commentedI still don't get it. Now I read the IS. Why we are not telling people to just add configurable fields?? Why put all this effort to store things in the base table? Between that and migrate, I really don't get why is this necessary.

Comment #132

joachim commentedWould be a good idea to also ping the maintainer of ECK, which deals with an almost identical situation to Data.

Comment #133

Anonymous (not verified) commented@chx IMHO a) to save unnecessary joins to additional field tables, b) mostly base fields of an entity are single valued so it's easy to just open the entity field data table and immediately see all values instead of "hunting" the values in different field tables(DX).

The b) point for me is very important but I guess moving entirely to Fields would save us quite a bit of coding(base field - definiton + display settings + form settings + all the code in core that handles base fields), no field would be outside of configuration, etc... so IMHO I'd agree with such approach.

Comment #134

plach@joachim:

Thanks for your feedback! As @Berdir was point out, we are no longer exposing the schema array through

hook_schema()so that issue should not affect us. Moreover atm I am trying to spot differences that might trigger schema changes by just inspecting entity type/field schema definitions, so that schema array should not be a concern at least in this basic implementation. I am envisioning a contrib module that could expand on the core behavior and provide schema diffs, change previews and similar cool stuff, but that would totally rely on existing code/libraries. Anyway, I pinged @fmizzell as you suggested :)@chx:

Well, actually denormalization in particular and performance in general are some of the biggest reasons I have in mind. Certainly we are still supporting the ability to add configurable fields via code, actually it's even possible to define bundle fields via code and they would get a dedicated field table, exactly as configurable fields. Adding stuff to the base table(s) is just another option that I think may be quite useful in certain situations. I must say that I'd very much prefer to use base fields defined in code (instead of bundle ones), though. I've always been disturbed by the fact that a configurable field, even one added in code and locked, is somehow "unreliable" and basing coded business logic on it feels just wrong to me. A possible option to make it easier to add base fields in scenarios where data is already available, could be to expose a way to specify that a certain field should be stored in a dedicated table even if it's a single-value base field.

Comment #135

fmizzell commentedI haven't looked at D8's entity stuff since the time when typed data was being discussed, so I am not sure that looking at the patch and trying to give feedback would be that helpful at all, but it does seem that @platch envisions a contrib module that could offer a UI to trigger creation and deletion of base fields, and that is exactly what ECK is doing in D7. So as long as that is possible ECK will be able to work with the core system.

Comment #136

plach@fmizzell:

Thanks! Is there any experience you'd like to share (tricky stuff, good solutions) that could be useful to design the D8 system?

Comment #137

fmizzell commentedIn ECK I am lucky to have almost full control of how base field's metadata gets manipulated (A user could modify the serialized array in the db, but why would you when there is an API?). Adding a new property/base field looks something like this:

So I can record manipulations that need to be performed to existing db tables and perform them during save. I am guessing that in D8 a lot of this metadata is in code or in the config system, so assuming that people is going through an API might be out of the question.

Knowing that users will go through an API eliminates the need for schema versions and other historical data (which makes everything much simpler), but on the other hand, this issue is more ambitious than just adding/deleting base fields, and saving historical information like diffs will be necessary anyways to help out with auto-migration features, etc.

Comment #138

plach@fmizzell:

#137 makes a lot of sense to me. As you correctly point out, the main difference in D8 is that Entity Field CRUD operations are not observable, at least at the moment, so what we need to do to detect changes is storing a copy of entity type and field storage definitions and comparing them with the ones currently available in the system. This has also one advantage though: such operation can be performed whenever we want and allows for multiple changes to be applied in a single run.

Anyway, as discussed with @yched elsewhere, we should probably ensure the Entity Manager handles all this stuff and the storage is just an event subscriber or something like that. However we can probably implement that approach as an API addition, so no need to tackle that here.

Comment #139

chx commentedYou need to realize that I have no bones in this -- mongodb will store whatever is the current field schema. As there's no database schema, for all it cares every document in a collection can be completely different. So from that perspective, I shouldn't care about this issue.

My concern is timing and resources and maintainability of the entity storage code. Please think carefully: is this really a release blocking task? Isn't it merely a feature? Shipping a field config with a module should be quite reliable AFAIK; it's already built and working.

Comment #140

fagoWhile performance benefits are great to have, I think the main reason for us having to do this is something else: So far entity types have been able to do updates to their entity base tables by writing the schema update operations theirselves. With the schema being generated, this is not possible anymore. Instead we need this API to continue to have the possibility for entity type providing modules to make changes to their base fields / base table schema.

Comment #141

gábor hojtsyComment #142

fagook, I looked through the latest version of the patch (branch 8.x-et-entity_schema_handling-1498720-plach) in detail now. In general, this looks great. Adding an updated version with some small documentation stuff and glitches fixed.

Here some remarks/questions:

I do not understand why the array_merge() order differs here. So this could use a comment / some explanation.

Given #2275659: Separate FieldableEntityInterface out of ContentEntityInterface it's actually not bound to content entities, but fieldable entities. I'm not sure whether it should be FieldableEntitySchemaHandlerInterface or better just "EntitySchemaHandlerInterface" + type hint on fieldable entity interface later on and ContentEntityInterface for now.

Yep, but we do not generally know which changes influence the table mappings and which don't. So shouldn't we just re-compute the mapping on every change?

Shouldn't those changes be in the SchemaHandler as well? The name and description of the schema handler implies that is used for dedicated fields as well. If we want to keep implementations separated, I'd suggest moving it to a trait and use the trait in the schema handler?

CommentSchemaHandlerThis one seems to be unused?

Is there a reason it checks the implementation and not on the sql storage interface?

oh, yes :)

Comment #143

fagoDoes it check whether the entity has data somewhere? I'm missing it.

Also, it would make sense to handle simple cases e.g. column additions and removals without migration / data loss I think? -> We do :)Comment #145

plachHere's my current work: part2 still needs work, and I realized also part1 needs a bit of additional work to handle index/keys changes, but this is definitely looking more like it. Not sending to the bot yet, but manual testing here looks promising (see https://twitter.com/plach__/status/497797333015601153). Adding some screenshots of the UI tweaks.

(this does not include @fago's changes, just saw them)

Comment #146

plachComment #147

plachComment #148

fagoThanks!

This misses the storage class check although commented - it should probably just call the requiresChanges method for now as it's the same in case of entity data? Or do we handle going from revisionable to not revisionable without migration?

So this makes the base system supported schema changes instead of letting the entity storage handle changes. As update.php list schema changes separately, it makes sense - but still hard-codes entity storage changes to be schema changes only.

Not sure whether that's a use-case, but should we continue routing change notification calls through the storage, such that a non-SQL storage could implement its own of storage adaptions as needed?

Then, the SQL storage could forward calls to the schema manager service internally. Thoughts?

Other storage engines might not have to do schema changes, but still e.g. data cleanup, changing indexes etc. might be interesting. Then who knows what sort of storage implementation people will come up with?

If we do this, the question is how we deal with the summary in update.php. I could see us to just continue to hard-code the summary to schema changes. Even if other storages cannot provide a summary, avoiding to locking them out of storage changes seems reasonable.

Comment #149

plachThis should address @fago's reviews (thanks :). Attached you can find also the interdiff between #142 and #145, which probably @fago missed.

I've been asking myself more or less the same questions (here and in other places): I think for now it safer to target the most specific scenario (just content entity types). We can discuss the details and "widen" the target safely, the opposite strategy would imply API breaks.

Fair point. But we can bring it even further and say that the storage should make no assumption on what is needed to reflect the change in the schema, so ideally it should be responsibility of the schema handler to instantiate a fresh table mapping. For now I just added a todo about removing those lines when we are done with #2274017: Make SqlContentEntityStorage table mapping agnostic .

I don't think that code belongs to the schema handler: the storage handles field data, while the schema handler deals with schema. IMHO this way the overall (CR)AP strategy is correctly split into its two respective areas of responsibility.

What do you mean? :)

It's overriding the

getEntitySchema()method as usual.Well, the current implementation hardcodes assumptions on the table layout that are specific to the core implementation provided by

ContentEntityDatabaseStorage. I think we can get rid of this entirely in #1740492: Implement a default entity views data handler.Validation is being implemented in part2 atm. My current approach is leaving the possibility to override data handling policies in the schema manager. The storage layer just exposes new methods to describe how/if the change affects data. This part could use some IRC discussion :)

Yep, we just need to drop revision tables. Added a comment to clarify that.

This is an area I spent quite some time thinking around: IMO ideally all these notifications should be routed through the entity manager, which in turn would be responsible for notifying interested entity handlers (probably only storage) and for proxying the notifications to other interested business objects, such as the schema manager. Among the rest this would allow the EM to clear its own caches as discussed with @yched in #2144263-87: Decouple entity field storage from configurable fields.

I agree the current approach is not the cleanest possible, but it is still an improvement wrt the HEAD code (although the interdiff looks worse) and it ensures that any change to definitions is persisted in state after being reflected in the storage. In fact every call is initially proxied to the storage, so we are losing nothing in terms of the use cases we are able to support.

I think we should clean this up in a dedicated issue: we can definitely implement the plan above without introducing relevant API changes.

Comment #150

plachComment #151

plachSorry, patch in #149 contains only part1 while it was meant to contain both. Here is the complete one. Interdiffs above are correct. Attached the difference between #149 and #151.

Comment #152

xjmLooks like the summary could use an update. :) Is that the full implementation of part 2?

Comment #153

plachI am not sure what to update, I think I followed strictly what is proposed in the summary.

Comment #154

gábor hojtsyI think the biggest question is are phase1 and phase2 both part of this issue intended to commit? What are missing pieces?

Comment #155

plachPart 1 and part 2 are split just for reviewer convenience. We agreed they should be brought on together. Then maybe we can commit them separately if that's the easier way to get this in, but they should be set to RTBC together.

I am still coding a few bits, but we should be close. Namely:

throw) is a totally non API breaking change.Additionally, I am wondering whether we should add a method to reconcile the definitions stored in state with the ones available in code. This would allow people to apply changes manually and just notify the schema manager that everything is fine again.

Comment #156

fagoGreat work, we've come along way already! Here a another review, I've not gone into details really, tried to stay more on the bigger picture for now.

hm, reading this again I'm wondering what an example of a change in the storage class would be, which would lead to a different schema being generated?

I guess it boilds down to table mapping changes, so maybe we can add a todo to compare the generated table mappings? I guess changing the class would be something you'd like to be able to do without being force into some migrations ;-)

Yes, but updateEntitySchema() seems to drop the schema and re-add it on the entity level - so there is still entity data migration required? It just handles dedicated revision tables better?

hm, yet another object with those event listeners. Should we start proxying all of them via the manager already as part of this issue?

It would make more sense to me to have the storage notified from the manager and not from the schema managaer as now, as there might be storages completely unrelated to schema. E.g. a site running mongodb might want something like a null schema handler (or even remove the service if possible later on).

Related, I'm not sure the SchemaManager should be the one who stores the definition changes into state. That does not directly seem to be related to schema managed, as it will be required to figuring out definition changes in general to which storages should be able to react - howsoever they need to do that. Maybe, mongo just needs to drop/clean some data?

So what about moving this to the EntityManager?

I see, good argument - but isn't it actually the other way round? E.g. changing an argument from FieldableInterface to ContentEntityInterface would not break things, but the other way round it would be broken as you narrow down the interface?

True, ok.

I'd not say this is traditional data that you saved as it's a consequence of the field definition change; i.e. it's a metadata/schema change not a data change. But as the storage class needs to have knowledge about the 'deleted' column anyway, it doesn't seem to matter much where it lives.

Nope? Not in the previous version I reviewed, nor in the latest one. Other storages override schemaHandler() to register it, but CommentStorage doesn't ?

I see, yeah let's deal with that over there.

Yep, I've been thinking about that as well. Given all the various reactions in different classes I've been wondering whether it makes sense to start making use of symfony events for that also. It should be possible to introduce without an API break though if we continue to call pre-existing listener methods. Proxying them through the storage for now is fine imo.

If there is only one possible value, having the constant seems superfluous ? Why not just set it to TRUE? Or better, just have general constants for CREATED, UPDATED and DELETED which we can re-use independent of what has been created|updated|deleted ?

How would I notice an aborted operation? Does it throw an exception? If so, that misses docs.

Comment #157

effulgentsia commentedSorry to be joining the review party so late (17 days since #128.3 was written). I started trying to absorb the patch, and the first thing that I got stuck on is:

Looks like this patch splits every content entity storage handler into a custom storage handler + a custom schema handler, but are we sure we want to require that split in every case? I support the split at the base class level (ContentEntityDatabaseStorage + ContentEntitySchemaHandler) and making it possible to subclass each one separately, but as a default case, would it be better DX for

ContentEntityDatabaseStorage::getEntitySchema()to call back into$this->storage->adjustSchema()(pending better name), so that most content entity types can get away with only implementing the one storage class unless they need a custom schema handler for more exotic reasons?Comment #158

effulgentsia commentedWhy does this need to be its own interface? Why not add the new methods to TableMappingInterface? I don't see where there's code that benefits from a narrower TableMappingInterface that lacks the new methods.

At least based on the examples in core, would

optimizeSchema()be a good name for this? If I'm reading it right, then the base class (ContentEntitySchemaHandler) generates a functionally correct schema, but what's left as a per-entity-type responsibility is adding indexes and setting not-null constraints (primarily to benefit indexes), so I think "optimize" would be a decent name for that, but open to other suggestions.Finally, neither #151 nor #2298525-48: Test issue for Make ContentEntityDatabaseStorage handle changes in the entity schema definition apply cleanly to HEAD anymore. Would it be possible to get an updated patch posted once HEAD changes are merged back into the sandbox?

Comment #159

plach@fago:

1: One example is the contact_message entity: it currently has null storage, but we have tests where a regular storage class is swapped in. We need to generate the schema in that case.

2: Yep, still working on that, as part of the entity keys/indexes stuff.

3: Unless every (content) entity type is stored in Mongo, I don't think a null schema manager would make sense. Anyway, I think the switch to the EntityManager as the class responsible for notifying (actually proxying notifications) about entity/field definition changes could deserve it's own issue, as I think there a few aspects to figure out that are not completely trivial. Personally I'd avoid rushing it in here, but if there's consensus we should be addressing that now, fine by me. What we could end-up with is a scenario where the EntityManager actually stores data in state and tracks changes in definitions and the schema manager receives notifications about those and interacts with the status report and the storage class to actually apply them. The only reason why I am currently routing calls through the schema manager is to ensure definitions are properly stored in state.

4: If we restrict the API to

ContentEntityInterfaceand then we decide to "relax" it to supportEntityInterfacewe break no existing code. I am not sure where aFieldableInterfacewould fit in a hypothetical hierarchy, but I'd guessContentEntityInterfacewould extend it, so the same reasoning would apply.6: I agree it's metadata. Currently what determines the decision of putting some code in the storage or in the schema handler is: if code touches table records it goes in the storage, otherwise in the schema handler.

7: Sorry, I missed what you meant.

9: Yep, this looks very event-ish to me too :)

10: Good point.

11: It is supposed to throw an exception, if not I will add it and document it :)

@effulgentsia:

This has a double reason:

Looking at core implementations, with the only exception of block content, every entity type that needs a specific schema handler needs also a specific storage, so I don't think this is a big DX burden. If it turns out I am wrong, we can try to refactor things so that the schema handler is injected in the storage class, but that would prevent lazy loading, which would be bad since the schema handler is a big class that is used very rarely.

Comment #160

plach@effulgentsia:

Because this is the specific implementation for core storage: other storage classes might want to provide completely different ways to deal with SQL tables and implement completely different table layouts (see also #2274017: Make SqlContentEntityStorage table mapping agnostic ). In that case the concepts of dedicated/shared tables would be meaningless.

I am completing a couple of changes and then I will merge head. Hopefully it won't be too painful :)

Comment #161

effulgentsia commentedHow? $storage is a constructor dependency of ContentEntitySchemaHandler, and is used already within ContentEntitySchemaHandler::getEntitySchema(). All I'm saying is that at the end of that implementation, call

$this->storage->optimizeSchema($schema). That doesn't add any additional object dependency that isn't already there.Is that really a benefit? If a particular entity type's storage class extends ContentEntityDatabaseStorage, then it's already bound to the concept that some kind of schema exists, so outside code knowing that an optimizeSchema() method can be called on it isn't very harmful, is it? I actually think that would be a legitimate method to define in SqlEntityStorageInterface. Note, outside code couldn't get a schema from it, it would need to pass one in and let the method modify it (i.e., add indexes).

I don't think this would change that. The consuming code you're talking about would still purely act on ContentEntitySchemaHandlerInterface exactly the same as now. It's only that the ContentEntitySchemaHandler implementation of the protected getEntitySchema() method would interact with the storage object it already has access to.

Ah, thanks for that explanation. In that case, should getReservedColumns() and getFieldColumnName() move to TableMappingInterface? Those seem generic to me rather than bound to any particular layout (dedicated/shared) strategy.

Comment #162

plachWell, the plan for #2274017: Make SqlContentEntityStorage table mapping agnostic is making the schema handler depend only on the table mapping class, by making the latter encapsulate the logic that is currently located in

ContentEntityDatabaseStorage::getTableMapping(). This would allow to instantiate a table mapping class wherever needed. However I just realized we are currently using thehasData()andcountFieldData()methods from the storage, so I guess we won't be able to make the schema handler completely independent from the storage.Anyway, personally I find handling schema only in the schema handler cleaner, but if there's consensus that the current DX is too bad (I definitely don't think so) your proposal is probably the best way forward.

Comment #163

plachActually any table layout change implies a data migration... updated summary.

Comment #164

plachThis addresses bullets 2, 7, 10, 11 of #156. The interdiff does not include 2 as I did quite a few merges meanwhile. I am waiting feedback on the other bullets. If we decide to address #156.3 in a separate issue, then I think we are ready to split this in sub-issuess as @eff proposed. I still have a couple issues on my todo list and there's still #161 to be discussed, but I think none of them would imply cross-issue changes, so we should be fine.

@effulgentsia:

If you want to proceed with the split, please use my sandbox and create a separate branch for each sub-issue, branching the dependent ones off the independent ones, so I can perform cross-branch changes if needed.

Comment #165

plachComment #166

plachSorry, I missed this one:

Well, not sure whether

getReservedColumns()is still needed, but it's tied to an implementation detail of field tables. However I could see the value of moving those in the base interface. I will do it on the next reroll.Comment #167

plachTagging for the rocketship.

Comment #168

effulgentsia commentedOk, will do when I get a chance (probably Monday). For now, just testing out the first part in #2326719: Move pseudo-private table mapping functions from ContentEntityDatabaseStorage to public API of DefaultTableMapping.

Comment #169

effulgentsia commentedThe issue in #168 is green, if anyone wants to review/rtbc that :)

Additionally, I opened #2326949: Move entity-type-specific schema information from the storage class to a schema handler, which is now also green, so let's make the decision there regarding #157/#162. I'm ok with that getting RTBC'd and committed if no one else objects to the DX of needing an extra class.

And, I opened #2326981: Move \Drupal\field\FieldException to \Drupal\Core\Field\FieldException, which is super simple, so can hopefully land very fast.

All 3 of those do not overlap at all, so can land in any order. So, I'm attaching a patch here that is the same as #164, but rebased on top of those 3, in case it helps any other reviewers focus on what's not covered by those issues.

Per #168, I'll commit all these as branches to the sandbox tomorrow, if no one beats me to it.

Comment #170

plachI updated the Remaining tasks section of the issue summary with the new issues. Please add the new ones there too.

Comment #171

effulgentsia commentedI setup the sandbox branches:

Comment #172

effulgentsia commentedOpened another child issue: #2330121: Replace ContentEntityDatabaseStorage::getSchema() with a new EntityTypeListenerInterface implemented by SqlContentEntityStorageSchema. Reviews there would be much appreciated!

Comment #173

xjmThanks for the great work separating it out into scoped steps.

Comment #174

effulgentsia commented#2330121: Replace ContentEntityDatabaseStorage::getSchema() with a new EntityTypeListenerInterface implemented by SqlContentEntityStorageSchema is almost RTBC.

The step after that is #2333113: Add an EntityDefinitionUpdateManager so that entity handlers can respond (e.g., by updating db schema) to code updates in a controlled way (e.g., from update.php).

Comment #177

plachComment #178

plach@eff splitted a small one (#2338873: Modules providing non-configurable field storage definitions can be uninstalled, leaving orphaned unpurged data) out of #2337927: SqlContentEntityStorage::onFieldStorageDefinition(Create|Update|Delete)() is broken for base fields as it really did not fit there.

Comment #179

plach#2338873: Modules providing non-configurable field storage definitions can be uninstalled, leaving orphaned unpurged data is not part of this meta, sorry for the noise.

Comment #180

effulgentsia commented#2337927: SqlContentEntityStorage::onFieldStorageDefinition(Create|Update|Delete)() is broken for base fields is now the final beta blocking child of this meta issue, so moved the "beta blocker" tag to there.

Comment #181

plach#2337927: SqlContentEntityStorage::onFieldStorageDefinition(Create|Update|Delete)() is broken for base fields is in, so I guess we can call this fixed now, thanks everyone for the amazing work!

Comment #182

dawehnerLet's ensure that we don't forget views: #2341323: Adapt the references field / table names in views, when corresponding entity schema changes

Comment #183

plachRe-adding tag

Comment #185

gábor hojtsyComment #186

xjmThe change record was not completely updated for these changes. I opened #2507879: [no patch] Change record for automatic entity schema updates needs updating.