Support for Drupal 7 is ending on 5 January 2025—it’s time to migrate to Drupal 10! Learn about the many benefits of Drupal 10 and find migration tools in our resource center.

Learning to rank or machine-learned ranking (MLR) is the application of machine learning, typically supervised, semi-supervised or reinforcement learning, in the construction of ranking models for information retrieval systems. Training data consists of lists of items with some partial order specified between items in each list. This order is typically induced by giving a numerical or ordinal score or a binary judgment (e.g. "relevant" or "not relevant") for each item. The ranking model's purpose is to rank, i.e. produce a permutation of items in new, unseen lists in a way which is "similar" to rankings in the training data in some sense. (https://en.wikipedia.org/wiki/Learning_to_rank)
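To make the "partial order" in the definition above concrete, here is a minimal sketch of how per-item judgments for one query imply preference pairs. The function name and the item ids are hypothetical and only serve the illustration; this is not code from the module.

```python
from itertools import combinations


def preference_pairs(judged_items):
    """Return (better, worse) pairs implied by relevance labels.

    judged_items maps an item id to its label for ONE query. Items with
    equal labels stay unordered, which is why the order is only partial.
    """
    pairs = []
    for (a, label_a), (b, label_b) in combinations(judged_items.items(), 2):
        if label_a > label_b:
            pairs.append((a, b))
        elif label_b > label_a:
            pairs.append((b, a))
    return pairs


# Hypothetical binary judgments (1 = relevant, 0 = not relevant).
judgments = {"node/12": 1, "node/7": 0, "node/31": 1}
print(preference_pairs(judgments))
# [('node/12', 'node/7'), ('node/31', 'node/7')]
```

The two relevant items are not ordered against each other, so a model is free to place either one first as long as both end up above the non-relevant item.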

As the client usually goes back and forth with the development company over small tweaks, every change you make to the search boosting as a developer requires a full check of all the other search terms, to make sure the boosting you are introducing doesn’t affect them. It’s a constant battle, and a frustrating one. Even more so because, in the above scenario, the result displayed at the top wasn’t the one the client wanted to show up in the first place. In the screenshot you can see that for this particular query, the result the customer considers most relevant is only ranked number 7. We’ve had earlier instances where the desired result wasn’t even in the top 50!

This module adds generic Learning to Rank support to Search API, with specific support for selecting a model in Search API Solr. Other backends can step in as well, as long as they support the feature and implement the interface.

Requirements

Requires the latest dev version of search_api_solr.

Requires the RankLib JAR file, or a hosting provider that supports this, such as Dropsolid Platform (https://dropsolid.com).

Requires Solr to be configured for LTR support, or a hosting provider that provides Solr with LTR enabled, such as Dropsolid Platform (https://dropsolid.com).

How to train the model?

- Add the training view field to a view where you want to allow training to happen. See the screenshot for how this could look.

- Fill in some searches and click the relevancy buttons below each result. You don't need to rank them all.

- Once you've trained some results, execute the training drush command (see below).

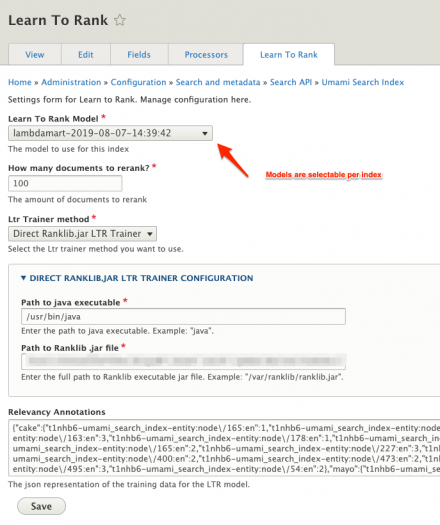

- Your model will be trained and will be uploaded to Solr. Then you can go to the index settings page and select the model that was just trained. It will have the name lambdamart-

- Execute the same searches and see the reranking happen without performance loss.
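For readers who want to see what a reranked request can look like at the Solr level, the sketch below builds the query parameters described in the Solr Learning to Rank documentation: the `rq` parameter with the `ltr` query parser, a `model` name and `reRankDocs`. How the module itself issues this request is not shown on this page, so the helper name, the example query and the model name `my_ltr_model` are assumptions for illustration only.

```python
from urllib.parse import urlencode


def ltr_rerank_params(query, model, rerank_docs=100):
    """Build Solr query parameters that rerank the top results with an LTR model.

    Only the first `rerank_docs` documents of the normal result list are
    rescored by the model; the rest keep their original order.
    """
    return {
        "q": query,
        "rq": f"{{!ltr model={model} reRankDocs={rerank_docs}}}",
        "fl": "id,score",
    }


# Hypothetical query and model name, not taken from the module.
params = ltr_rerank_params("opening hours", "my_ltr_model")
print(params["rq"])
print(urlencode(params))
```

Because the model only rescores a bounded number of top documents, the extra cost per query stays limited, which is consistent with the reranking described above.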

Training Drush Command

drush8 ltr-train <view_id> <view_display_id>

drush8 ltr-train search page_1
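RankLib reads training data as plain text lines of the form `<label> qid:<query id> <feature id>:<value> ... # <comment>`, with feature ids starting at 1. The file that the drush command actually generates is not shown here, so the following is only a sketch of that format; the function name, the feature values and the node ids are hypothetical.

```python
def to_ranklib_line(label, query_id, features, comment=""):
    """Format one judged search result as a RankLib training line.

    label    -- relevance judgment for this result (higher is better)
    query_id -- identifier shared by all results of the same search
    features -- feature values in a fixed order; ids are assigned from 1
    comment  -- optional free text, e.g. the document id
    """
    parts = [str(label), f"qid:{query_id}"]
    parts += [f"{i}:{value}" for i, value in enumerate(features, start=1)]
    line = " ".join(parts)
    return f"{line} # {comment}" if comment else line


# Hypothetical judgments for one search (query id 3) with two features each.
print(to_ranklib_line(1, 3, [0.5, 12.0], "node/12"))
print(to_ranklib_line(0, 3, [0.1, 4.0], "node/7"))
```

Lines that belong to the same search share a `qid`, which is how the trainer knows which results were judged against each other.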

Documentation & Resources

A detailed explanation can be found at https://dropsolid.com/en/blog/machine-learning-optimizing-search-results...

Documentation on how it can be used in Solr: https://lucene.apache.org/solr/guide/7_7/learning-to-rank.html

Documentation on how it can be used in Elasticsearch: https://elasticsearch-learning-to-rank.readthedocs.io/en/latest/

Note: This is not available in a stable version for Elasticsearch. I recommend sticking to Solr unless you really know the implications for the hosting side of things. As quoted from its documentation: "It’s expected you’ll confirm some security exceptions, you can pass -b to elasticsearch-plugin to automatically install"

Roadmap

Right now there are no analytics available in the module. We're thinking about how best to integrate them into the module or into the Dropsolid Platform.

Project information

Seeking new maintainer

The current maintainers are looking for new people to take ownership.

- Module categories: Site Search

- Ecosystem: Search API

- Created by Nick_vh

Stable releases for this project are covered by the security advisory policy.

There are currently no supported stable releases.